CoreDNS (kube-dns) Resolution Truncation Issue on Kubernetes (Amazon EKS)

This article describes a DNS resolution issue that can occur on Kubernetes. In particular, the issue can appear when a Pod relies on CoreDNS (kube-dns) to resolve records that return large DNS responses, such as Amazon ElastiCache endpoints with many nodes.

Note: to explain the details, I used Amazon EKS with Kubernetes version 1.15 and CoreDNS (v1.6.6-eksbuild.1) as the example environment.

Another note before diving into the remedies: this article focuses on one specific failure mode, DNS response truncation. It does not mean every DNS failure on EKS or Kubernetes should be solved by switching CoreDNS to TCP. In modern environments, especially when the upstream path includes hybrid DNS forwarding such as Route 53 Resolver outbound endpoints or on-premises DNS servers, moving more DNS traffic to TCP may simply move the bottleneck to another layer. Therefore, treat the suggestions below as troubleshooting options with trade-offs, not universal defaults.

What’s the problem

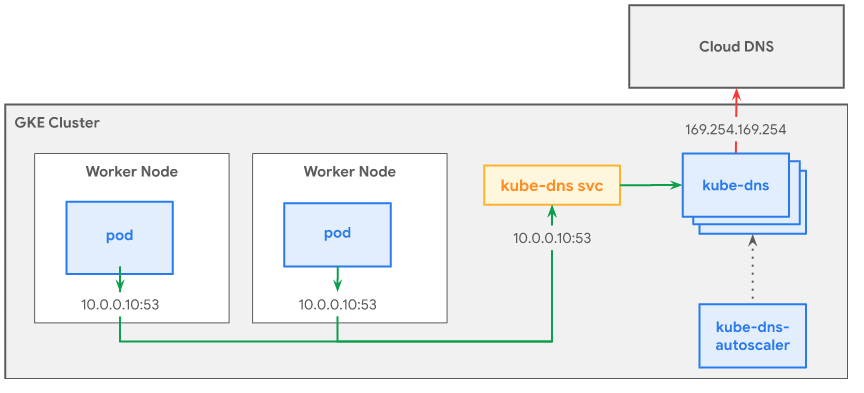

Kubernetes adds an extra DNS resolution layer for service discovery. As a result, containerized applications running in a cluster usually depend heavily on the cluster DNS service, which is commonly exposed through the kube-dns add-on.

In most environments, CoreDNS is deployed as the default DNS provider inside the cluster.

Pods can use automatically generated service names that map to a Kubernetes Service IP (ClusterIP), such as my-svc.default.svc.cluster.local. This makes internal service discovery much more flexible because applications can use stable hostnames instead of hard-coded private IP addresses. The kube-dns add-on can also resolve external DNS records, which allows Pods to access services outside the cluster.

Symptom

In most common cases, everything works fine and you may never notice a problem in production. However, if your application needs to resolve a record with a large DNS response payload, for example an ElastiCache endpoint that returns many records, you may find that the application never resolves the target correctly.

You can install dig in a Pod and compare query results to narrow down the problem:

# Query an external DNS provider first.
# Make sure the Pod can reach the Internet;
# otherwise, replace 8.8.8.8 with a reachable private DNS server.
$ dig example.com @8.8.8.8

# Query CoreDNS directly
$ dig example.com @<YOUR_COREDNS_SERVICE_IP>
$ dig example.com @<YOUR_COREDNS_PRIVATE_IP>

# Query another record to compare behavior
$ dig helloworld.com @<YOUR_COREDNS_PRIVATE_IP>

For example, assume the following CoreDNS Pods and kube-dns Service are running in the cluster:

NAMESPACE     NAME                          READY   STATUS    RESTARTS   AGE    IP              NODE                               NOMINATED NODE   READINESS GATES
kube-system   pod/coredns-9b6bd4456-97l97   1/1     Running   0          5d3h   192.168.19.7    XXXXXXXXXXXXXXX.compute.internal   <none>           <none>
kube-system   pod/coredns-9b6bd4456-btqpz   1/1     Running   0          5d3h   192.168.1.115   XXXXXXXXXXXXXXX.compute.internal   <none>           <none>

NAMESPACE     NAME               TYPE        CLUSTER-IP    EXTERNAL-IP   PORT(S)         AGE    SELECTOR
kube-system   service/kube-dns   ClusterIP   10.100.0.10   <none>        53/UDP,53/TCP   5d3h   k8s-app=kube-dns

I can then use commands like the following to identify where the failure occurs:

dig example.com @10.100.0.10
dig example.com @192.168.19.7
dig example.com @192.168.1.115
dig helloworld.com @10.100.0.10
dig helloworld.com @192.168.19.7
dig helloworld.com @192.168.1.115

If the lookup fails through both CoreDNS Pod IPs, while other records still work when using CoreDNS as the nameserver, it strongly suggests that CoreDNS is having trouble forwarding queries for a specific target. That is the scenario discussed in this article. However, if the CoreDNS Pod IP works while the Service IP (ClusterIP) fails, or if neither path works consistently, then the investigation should focus on Kubernetes networking components such as the CNI plugin, kube-proxy, cloud provider settings, or anything else that could break Pod-to-Pod communication or host-level networking.

How to reproduce

In my test environment, I was running an Amazon EKS 1.15 cluster with the default components, including CoreDNS, the AWS CNI plugin, and kube-proxy. The issue can be reproduced with the following steps:

$ kubectl create svc externalname quote-redis-cluster --external-name <MY_DOMAIN>
$ kubectl create deployment nginx --image=nginx
$ kubectl exec -it <nginx> -- bash
$ apt-get update && apt-get install -y dnsutils && nslookup quote-redis-cluster

My cluster had one nginx Pod and two CoreDNS Pods running:

NAMESPACE     NAME                          READY   STATUS    RESTARTS   AGE    IP               NODE                                                NOMINATED NODE   READINESS GATES
default       pod/nginx-554b9c67f9-w9bb4    1/1     Running   0          40s    192.168.70.172   XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX.compute.internal   <none>           <none>
...
kube-system   pod/coredns-9b6bd4456-6q9b5   1/1     Running   0          3d3h   192.168.39.186   XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX.compute.internal   <none>           <none>
kube-system   pod/coredns-9b6bd4456-8qgs8   1/1     Running   0          3d3h   192.168.69.216   XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX.compute.internal   <none>           <none>

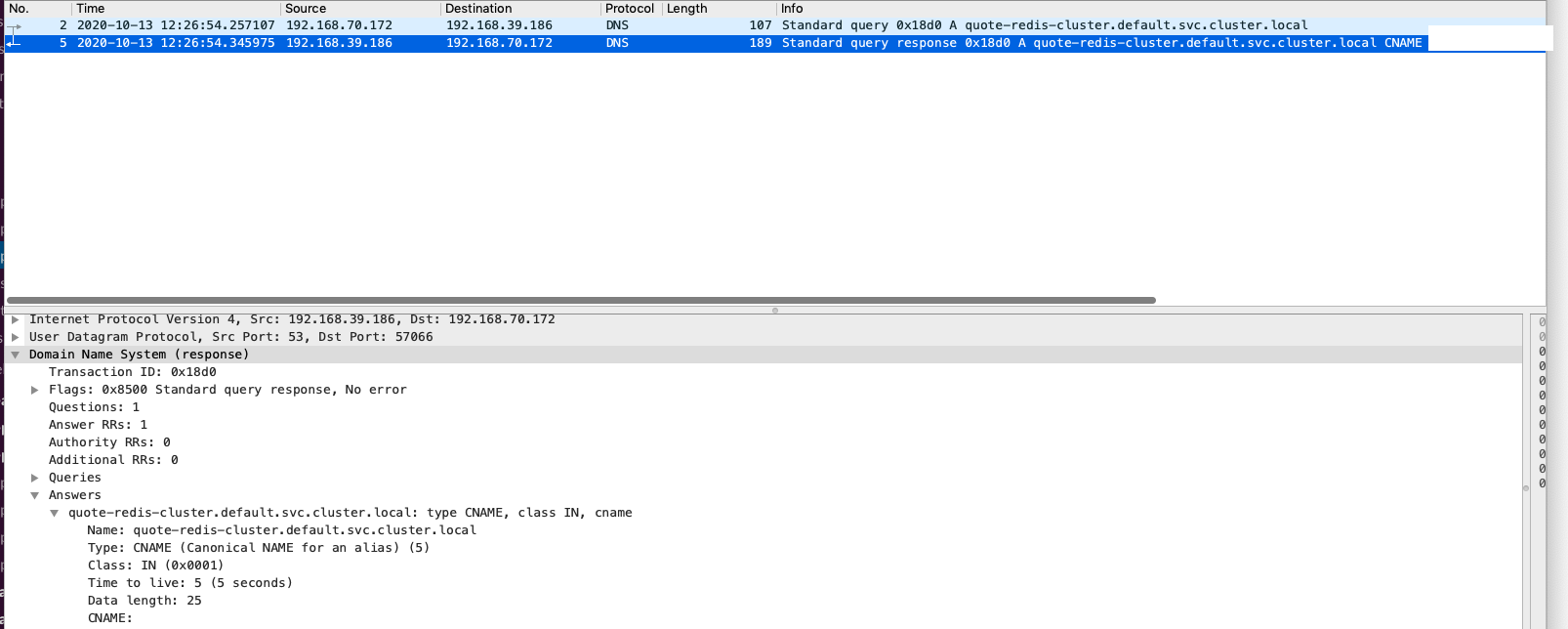

Here is the problem. To largely rule out Kubernetes Service routing issues such as iptables-based forwarding, I ran nslookup directly against the CoreDNS Pod IP 192.168.39.186 instead of the kube-dns ClusterIP.

The result only returns the canonical name (CNAME), without any IP addresses for example.com:

$ kubectl exec -it nginx-554b9c67f9-w9bb4 -- bash
root@nginx-554b9c67f9-w9bb4:/# nslookup quote-redis-cluster 192.168.39.186
Server: 192.168.39.186
Address: 192.168.39.186#53

quote-redis-cluster.default.svc.cluster.local canonical name = example.com.

Note: the domain name example.com has a normal A record set with many IP addresses. In the real case, example.com was an Amazon ElastiCache endpoint that returned the IP addresses of multiple ElastiCache nodes.

However, when testing another domain from the same container with the same DNS resolver (192.168.39.186), nslookup can successfully return IP addresses:

$ kubectl create svc externalname my-endpoint --external-name success-domain.com
$ kubectl exec -it nginx-554b9c67f9-w9bb4 -- bash
root@nginx-554b9c67f9-w9bb4:/# nslookup my-endpoint 192.168.39.186
Server: 192.168.39.186
Address: 192.168.39.186#53

my-endpoint.default.svc.cluster.local canonical name = success-domain.com.
Name: success-domain.com.
Address: 11.11.11.11
Name: success-domain.com.
Address: 22.22.22.22

This is the issue I would like to discuss. Let’s break it down and understand why the results differ.

Deep dive into the root cause

Let’s break down what happens inside. To follow the analysis, it helps to know the following IP addresses:

- 192.168.70.172 (client, nslookup): the nginx Pod, in which I ran nslookup to test DNS resolution.

- 192.168.39.186 (CoreDNS): the private IP of a CoreDNS Pod, which acts as a DNS resolver behind the kube-dns Service.

- 192.168.69.216 (CoreDNS): the private IP of the second CoreDNS Pod, which also acts as a DNS resolver behind the kube-dns Service.

- 192.168.0.2 (AmazonProvidedDNS): the default DNS resolver in the VPC, which EC2 instances can use.

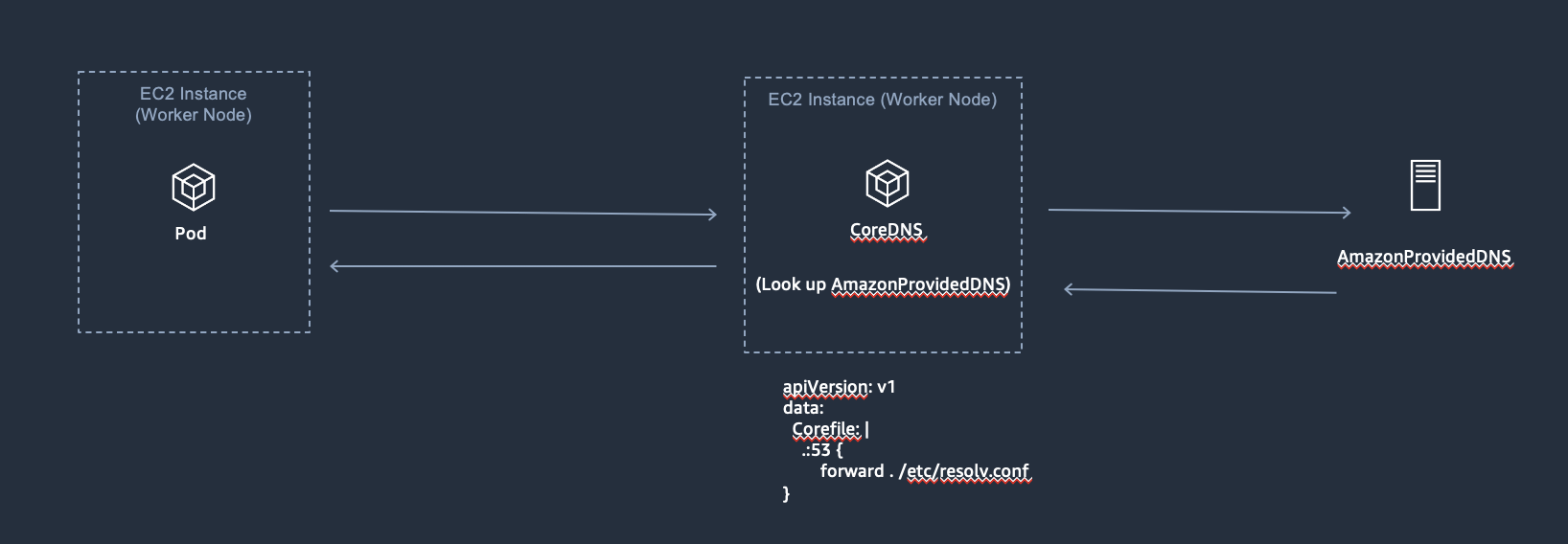

On EKS, the DNS resolution flow looks like this:

First, the Pod issues a DNS query to CoreDNS. When CoreDNS receives the query, it checks whether a cached DNS record exists. If not, it forwards the request to the upstream DNS resolver according to its configuration.

CoreDNS finds the upstream DNS resolver in /etc/resolv.conf, which the CoreDNS Pod takes from its node (in my environment, this was AmazonProvidedDNS). The Corefile configures this as follows:

apiVersion: v1
data:
  Corefile: |
    .:53 {
        forward . /etc/resolv.conf
        ...
    }
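The line forward . /etc/resolv.conf tells the forward plugin to use the nameserver entries in that file as its upstream servers. The rough Python sketch below only illustrates that extraction step. The helper name and the sample file content are assumptions, and this is not CoreDNS's own parser. The sample points at a VPC resolver at 192.168.0.2, which matches the AmazonProvidedDNS address in this example.

```python
from __future__ import annotations


def upstreams_from_resolv_conf(text: str) -> list[str]:
    """Return nameserver addresses in file order.

    Hypothetical helper approximating how a resolv.conf-style file yields
    upstream servers; it is not CoreDNS's actual parser.
    """
    servers: list[str] = []
    for raw in text.splitlines():
        # Drop comments that start with '#' or ';'.
        line = raw.split("#", 1)[0].split(";", 1)[0].strip()
        fields = line.split()
        if len(fields) >= 2 and fields[0] == "nameserver":
            servers.append(fields[1])
    return servers


# Assumed content of the resolv.conf a CoreDNS Pod inherits from its node.
SAMPLE_RESOLV_CONF = """\
# sample only
search ec2.internal
nameserver 192.168.0.2
options timeout:2 attempts:5
"""

if __name__ == "__main__":
    print(upstreams_from_resolv_conf(SAMPLE_RESOLV_CONF))  # ['192.168.0.2']
```

With this sample, every query that is not answered from the cache or by the cluster.local zone ends up at 192.168.0.2. That is why the packet captures below show traffic between CoreDNS and AmazonProvidedDNS.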

The responses then follow the same path back to the client. In the normal (happy) case, A record queries always return the expected addresses in every response.

Now, let’s look at what happens in the failing case:

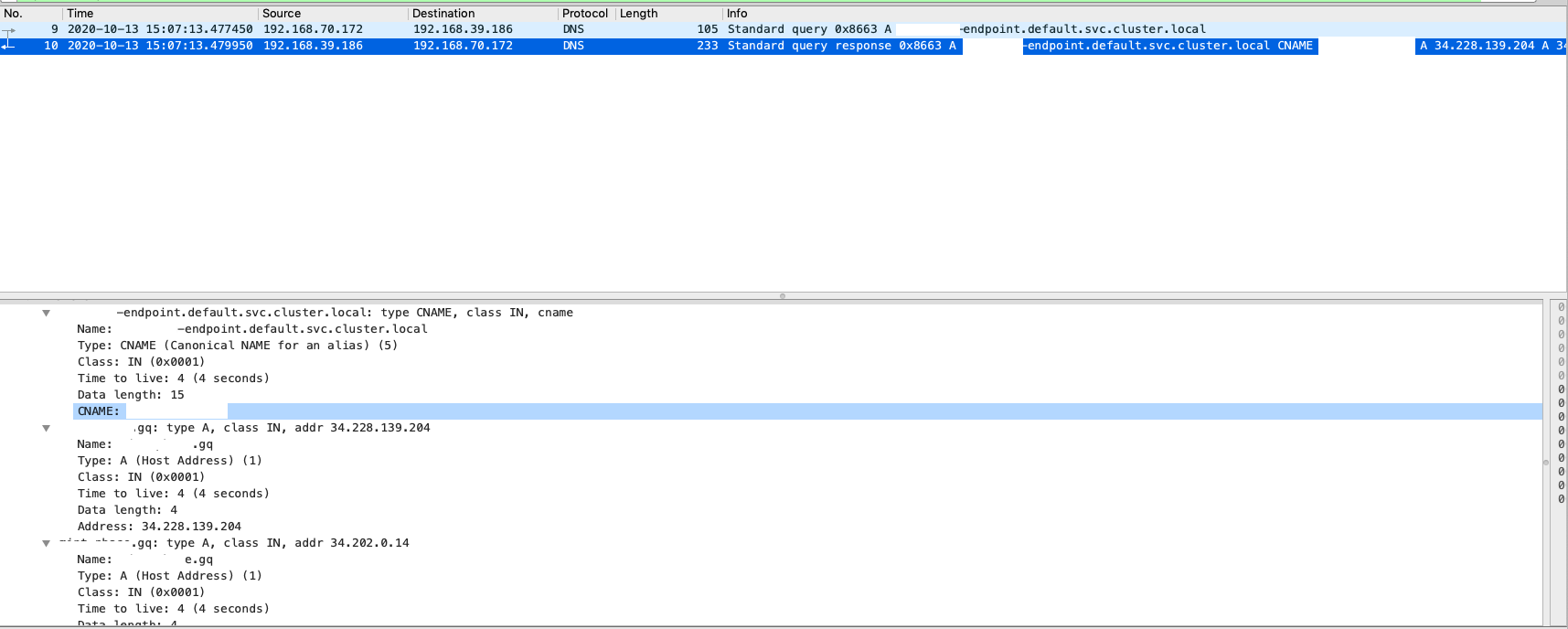

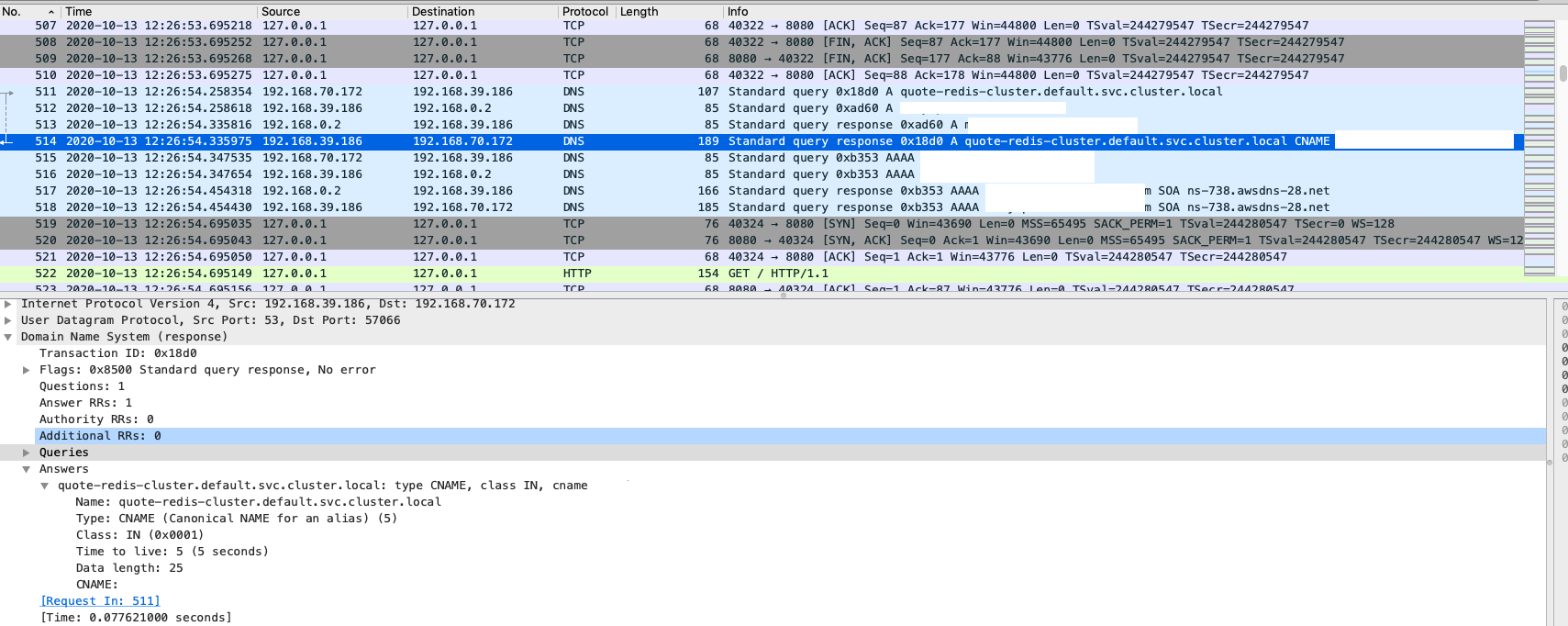

1) On the client side, I captured packets and saw only a response containing the CNAME record, which matches the nslookup output.

2) On the CoreDNS node, I also captured packets and could see that:

- The CoreDNS Pod queried AmazonProvidedDNS for the record.

- The response contained only the CNAME record.

192.168.70.172 -> 192.168.39.186 (client queries CoreDNS for A quote-redis-cluster.default.svc.cluster.local)
192.168.39.186 -> 192.168.0.2 (CoreDNS queries AmazonProvidedDNS for A quote-redis-cluster.default.svc.cluster.local)
192.168.0.2 -> 192.168.39.186 (AmazonProvidedDNS responds with the CNAME record)
192.168.39.186 -> 192.168.70.172 (CoreDNS Pod returns the CNAME record)

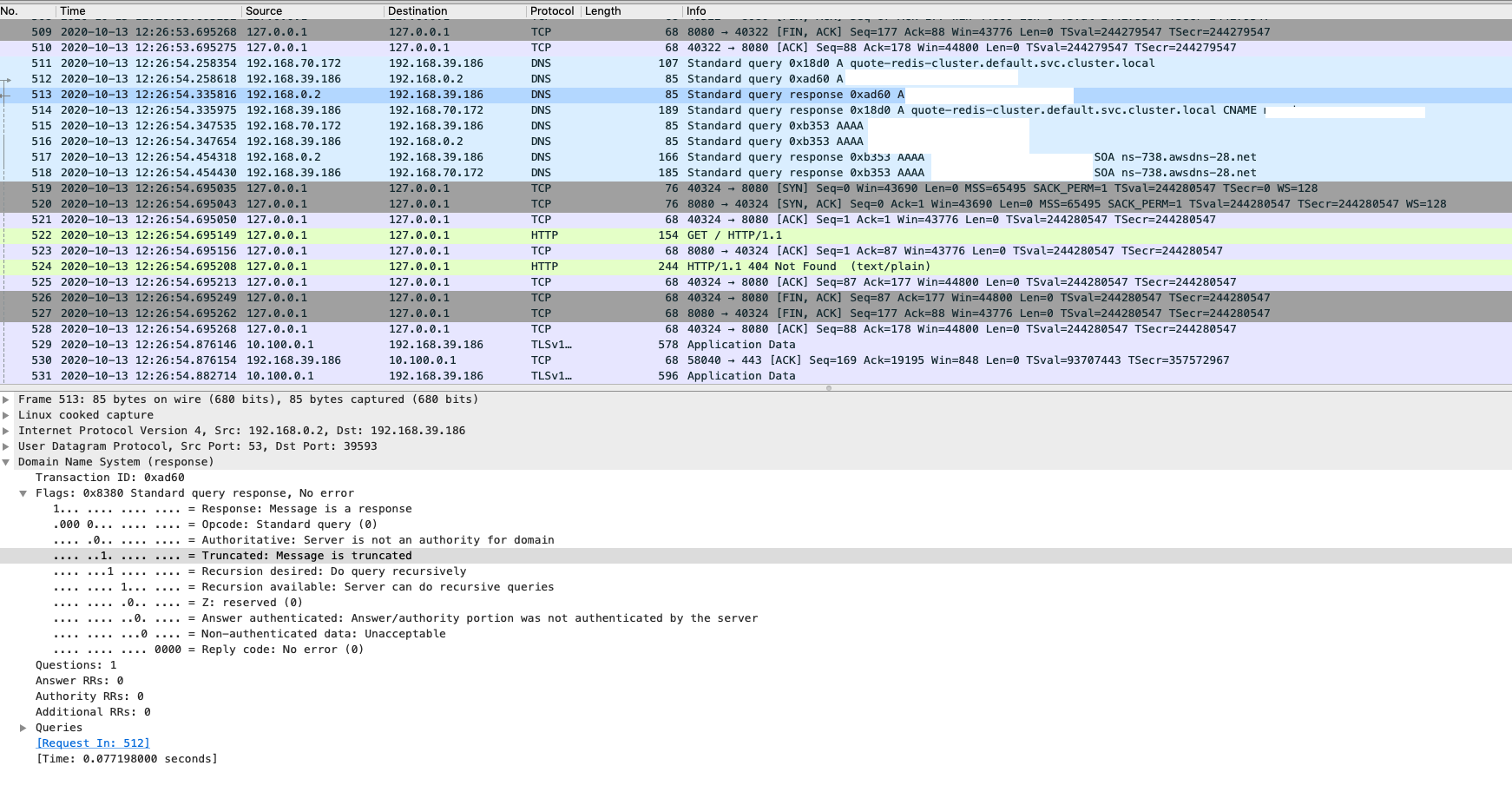

A closer look at the packet captured on the CoreDNS node reveals the key point: the truncation flag (TC flag) was set, which means the DNS response was truncated.

At this stage, we can identify that the DNS response was truncated.
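If you export the captured packet as raw bytes, you can check the TC flag yourself. It is bit 0x0200 of the 16-bit flags word in the fixed 12-byte DNS header defined in RFC#1035. The following Python sketch decodes that header. The sample bytes are made up to resemble a truncated response with one answer record and are not taken from the capture above.

```python
import struct
from dataclasses import dataclass

QR_BIT = 0x8000      # 1 = response
TC_BIT = 0x0200      # 1 = message was truncated
RCODE_MASK = 0x000F  # 0 = NOERROR, 2 = SERVFAIL, ...


@dataclass
class DnsHeader:
    msg_id: int
    is_response: bool
    truncated: bool
    rcode: int
    qdcount: int
    ancount: int
    nscount: int
    arcount: int


def parse_header(message: bytes) -> DnsHeader:
    """Decode the fixed 12-byte DNS header (six big-endian 16-bit fields)."""
    if len(message) < 12:
        raise ValueError("DNS message is shorter than the 12-byte header")
    msg_id, flags, qd, an, ns, ar = struct.unpack("!6H", message[:12])
    return DnsHeader(
        msg_id=msg_id,
        is_response=bool(flags & QR_BIT),
        truncated=bool(flags & TC_BIT),
        rcode=flags & RCODE_MASK,
        qdcount=qd, ancount=an, nscount=ns, arcount=ar,
    )


if __name__ == "__main__":
    # Made-up header: QR, TC, RD and RA set (flags 0x8380), one question, one answer.
    sample = struct.pack("!6H", 0xC9BB, 0x8380, 1, 1, 0, 0)
    print(parse_header(sample).truncated)  # True
```

Wireshark and tcpdump show the same bit as the "Truncated" flag. The sketch only makes explicit where that bit lives.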

Why was the DNS response (message) truncated?

When you first see message truncation, you might think the upstream DNS resolver dropped part of the payload or behaved incorrectly. In reality, this is expected behavior based on the original design of DNS.

DNS primarily uses the User Datagram Protocol (UDP) on port number 53 to serve requests. Queries generally consist of a single UDP request from the client followed by a single UDP reply from the server. (Wikipedia)

Traditionally, a UDP DNS response was limited to 512 bytes. That worked well in the early days of the Internet, but over time DNS responses began to carry more information and the original limitation became harder to live with. According to RFC#1123, resolvers and name servers should support TCP as a fallback when a single DNS payload exceeds the UDP limit:

It is also clear that some new DNS record types defined in the future will contain information exceeding the 512 byte limit that applies to UDP, and hence will require TCP. Thus, resolvers and name servers should implement TCP services as a backup to UDP today, with the knowledge that they will require the TCP service in the future.

Later, RFC#5966 further described DNS transport over TCP. In practice, the usual options are either to use EDNS0 or to retransmit the query over TCP after the truncation flag (TC) is set:

In the absence of EDNS0 (Extension Mechanisms for DNS 0), the normal behaviour of any DNS server needing to send a UDP response that would exceed the 512-byte limit is for the server to truncate the response so that it fits within that limit and then set the TC flag in the response header.

When the client receives such a response, it takes the TC flag as an indication that it should retry over TCP instead.

Therefore, when an answer exceeds 512 bytes, the upstream server should truncate it and set the TC flag to indicate that the response is incomplete. With EDNS0, the limit is instead the UDP payload size that the requester advertised. If both the client and the server support EDNS0, they can exchange larger UDP packets by advertising the supported payload size in an OPT pseudo-record in the additional section. Otherwise, the client is expected to retry the query over TCP. TCP is also used for operations such as zone transfers, and some resolver implementations may use TCP more broadly.
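The size rule described above fits in a few lines. This Python sketch assumes the EDNS0 rules from RFC#6891, under which an advertised size below 512 is treated as 512. It also assumes a hypothetical server-side cap of 4096 bytes. The response sizes in the usage lines are illustrative, not measured.

```python
from typing import Optional

CLASSIC_UDP_LIMIT = 512  # RFC 1035 limit when the query carries no EDNS0 OPT record


def udp_response_limit(client_edns_size: Optional[int], server_max: int = 4096) -> int:
    """Largest UDP response a server may return for one query.

    client_edns_size is the payload size advertised in the query's OPT record,
    or None if the query had no EDNS0. server_max is an assumed server-side cap.
    """
    if client_edns_size is None:
        return CLASSIC_UDP_LIMIT
    advertised = max(client_edns_size, CLASSIC_UDP_LIMIT)  # RFC 6891: <512 counts as 512
    return max(min(advertised, server_max), CLASSIC_UDP_LIMIT)


def needs_truncation(response_size: int, client_edns_size: Optional[int],
                     server_max: int = 4096) -> bool:
    """True if the full answer cannot fit, so the server truncates and sets TC."""
    return response_size > udp_response_limit(client_edns_size, server_max)


if __name__ == "__main__":
    print(needs_truncation(700, None))   # True: no EDNS0, 512-byte limit
    print(needs_truncation(700, 4096))   # False: fits in the advertised buffer
    print(needs_truncation(1500, 1232))  # True: larger than the advertised buffer
```

The same answer can therefore succeed or be truncated depending only on whether the requester sent EDNS0 and what size it advertised.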

How to remedy the issue?

The symptom usually appears when Pods resolve a DNS record over UDP through CoreDNS and the response payload exceeds 512 bytes. In that case, it is expected that the response will be truncated and returned with the TC flag set.

However, when CoreDNS receives a truncated response with the TC flag set, the default behavior in this EKS example is not to retry the query over TCP automatically. Instead, CoreDNS forwards the truncated response back to the client, which then sees an incomplete DNS answer.

Several solutions can be considered, but before changing the behavior of CoreDNS, it is useful to classify the failure first:

- If the response is clearly truncated (the TC flag is set), then EDNS0 or TCP fallback are both valid directions.

- If there is no truncation and you instead see timeouts, SERVFAIL, packet loss, or intermittent failures, then the investigation should shift to networking, upstream resolver health, security groups/NACLs, conntrack, or other DNS forwarding components.

- If your DNS path includes more than Pod -> CoreDNS -> AmazonProvidedDNS, such as Route 53 Resolver outbound endpoints, custom DNS appliances, or on-premises resolvers, you should evaluate the whole forwarding chain before enabling more TCP retries.
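The checklist above can be written as a small triage helper. This minimal Python sketch assumes that reply is the raw bytes of a single DNS reply captured for the failing lookup, and that None means no reply arrived (a timeout). The labels and next steps are hypothetical wording paraphrased from the list, not the output of any real tool.

```python
import struct
from typing import Optional

TC_BIT = 0x0200
RCODE_SERVFAIL = 2

# Next steps paraphrased from the checklist above (hypothetical labels).
NEXT_STEP = {
    "truncated": "EDNS0 or TCP fallback are both valid directions",
    "timeout": "check networking: security groups/NACLs, conntrack, CNI, kube-proxy",
    "servfail": "check upstream resolver health and the DNS forwarding chain",
    "malformed": "check the capture and the network path",
    "ok": "no truncation or error in this reply",
}


def classify_lookup(reply: Optional[bytes]) -> str:
    """Map one raw DNS reply (None means no reply arrived) to a failure class."""
    if reply is None:
        return "timeout"
    if len(reply) < 12:
        return "malformed"
    (flags,) = struct.unpack("!H", reply[2:4])  # flags word follows the 16-bit ID
    if flags & TC_BIT:
        return "truncated"
    if (flags & 0x000F) == RCODE_SERVFAIL:
        return "servfail"
    return "ok"


if __name__ == "__main__":
    truncated_reply = struct.pack("!6H", 7, 0x8380, 1, 0, 0, 0)
    label = classify_lookup(truncated_reply)
    print(label, "->", NEXT_STEP[label])
```

The helper checks truncation before SERVFAIL on purpose. Only the truncated case makes the EDNS0 and TCP remedies below relevant.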

Solution 1: Using EDNS0

In many common use cases, the default 512-byte limit is enough because DNS responses usually do not need to carry many IP addresses. However, the limit can be reached in scenarios such as:

- The record returns many targets because DNS is being used for round-robin load distribution.

- The target is an ElastiCache endpoint with many nodes.

- Other use cases may also produce unusually large DNS responses.

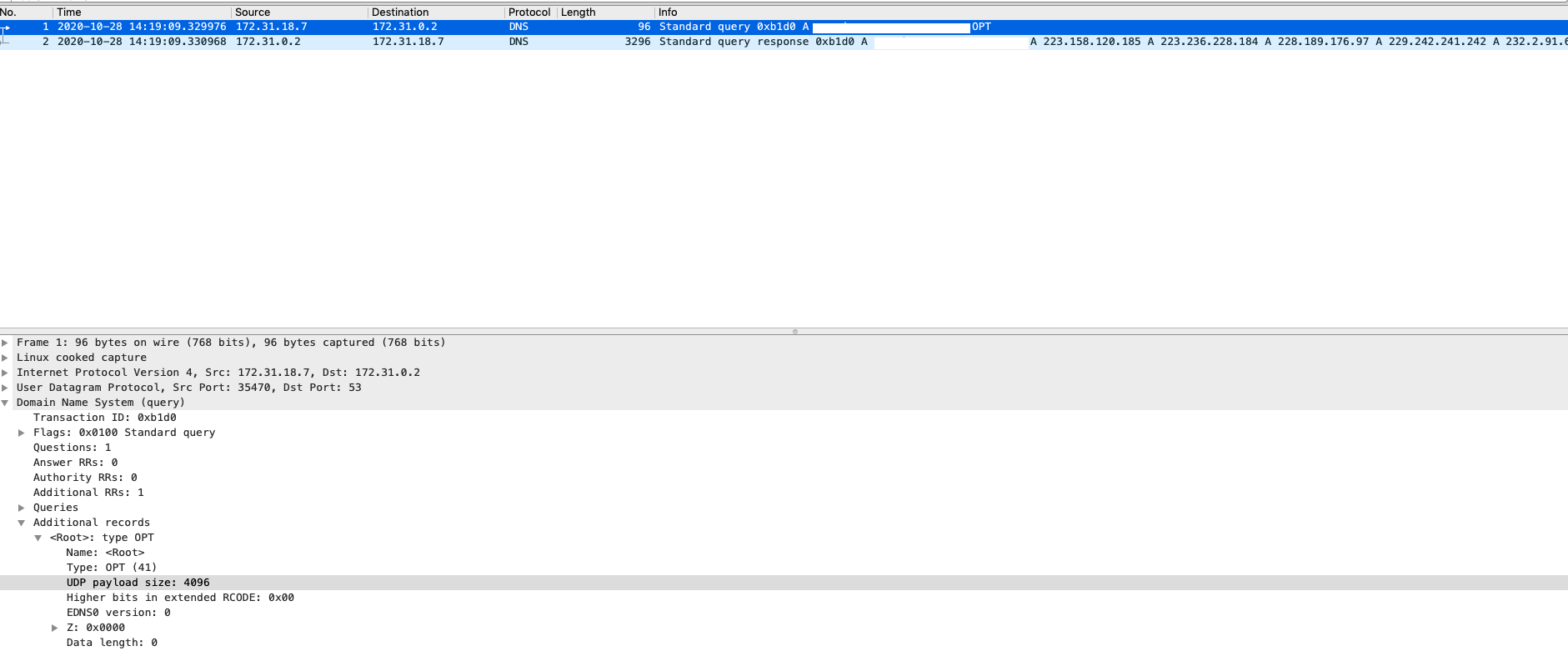

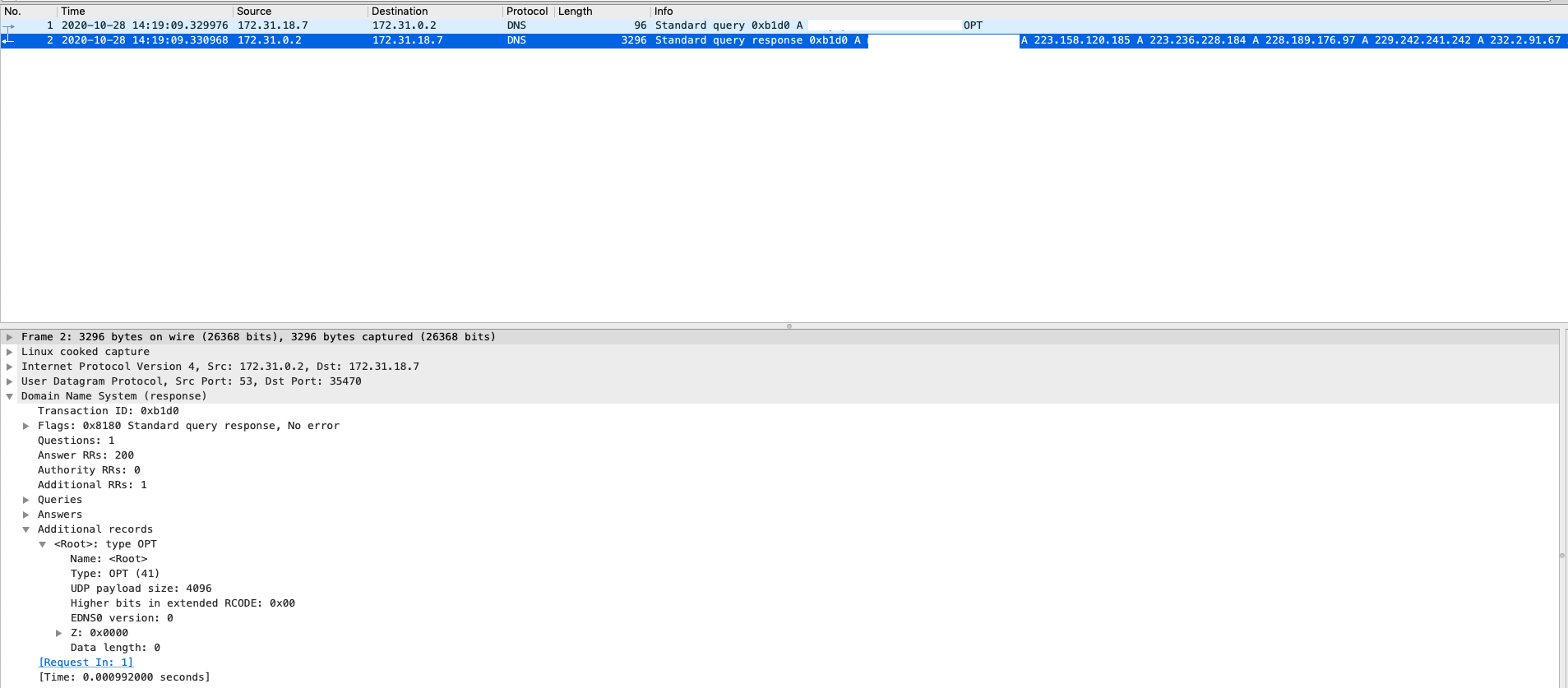

If you expect larger DNS responses, the client can send EDNS0 information to advertise a larger UDP buffer size. This allows the server and intermediate resolvers such as CoreDNS to exchange larger DNS answers over UDP.

This is usually the first option to consider when:

- The issue is limited to a subset of records with large answers.

- The application or DNS client library already supports EDNS0.

- You would like to keep using UDP and avoid extra TCP handshake overhead.

One possible workflow is:

1) Resolve the domain by querying its CNAME record.

2) Once you get the mapped domain name, send another A record query with the EDNS option.

(You can implement this logic in your own application. There are many different ways to enable EDNS, so please refer to the documentation for your programming language or DNS library.)
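The two-step workflow above can be sketched with only the Python standard library. The sketch assumes you send the bytes yourself, for example over a UDP socket, and parse the CNAME target from the first answer. It only builds the wire-format queries. The second query carries an EDNS0 OPT pseudo-record (RFC#6891) that advertises a 4096-byte UDP buffer. The function names, message IDs, and the 4096 value are illustrative assumptions. In a real application, prefer the EDNS0 option of your DNS library.

```python
import struct
from typing import Optional

QTYPE_A, QTYPE_CNAME, QTYPE_OPT = 1, 5, 41
QCLASS_IN = 1
FLAG_RD = 0x0100  # ask the resolver to recurse


def encode_name(name: str) -> bytes:
    """Encode a domain name as length-prefixed labels ending with a zero byte."""
    out = b""
    for label in name.rstrip(".").split("."):
        raw = label.encode("ascii")
        if not 0 < len(raw) < 64:
            raise ValueError(f"invalid label: {label!r}")
        out += bytes([len(raw)]) + raw
    return out + b"\x00"


def build_query(name: str, qtype: int, msg_id: int,
                edns_udp_size: Optional[int] = None) -> bytes:
    """Build a one-question query, optionally with an EDNS0 OPT pseudo-record."""
    arcount = 0 if edns_udp_size is None else 1
    header = struct.pack("!6H", msg_id, FLAG_RD, 1, 0, 0, arcount)
    question = encode_name(name) + struct.pack("!2H", qtype, QCLASS_IN)
    if edns_udp_size is None:
        return header + question
    # OPT RR: root owner name, TYPE=41, CLASS=advertised UDP payload size,
    # TTL=0 (extended RCODE, version 0, no flags), RDLENGTH=0 (no options).
    opt = b"\x00" + struct.pack("!HHIH", QTYPE_OPT, edns_udp_size, 0, 0)
    return header + question + opt


if __name__ == "__main__":
    # Step 1: plain CNAME query for the Service name.
    step1 = build_query("my-svc.default.svc.cluster.local", QTYPE_CNAME, 0x1111)
    # Step 2: A query for the CNAME target (example.com here), advertising 4096 bytes.
    step2 = build_query("example.com", QTYPE_A, 0x2222, edns_udp_size=4096)
    print(len(step1), len(step2))  # 50 40
```

The OPT record adds only 11 bytes to the query. That is why EDNS0 is a cheap way to ask for larger UDP answers when both ends support it.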

Here is an example of the output you can see when using dig:

$ dig -t CNAME my-svc.default.svc.cluster.local
... (Getting canonical name of the record, e.g. example.com) ...

$ dig example.com
;; Got answer:
;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 51739
;; flags: qr rd ra; QUERY: 1, ANSWER: 22, AUTHORITY: 0, ADDITIONAL: 1

;; OPT PSEUDOSECTION:
; EDNS: version: 0, flags:; udp: 4096
; COOKIE: 7fb5776972bf2aa4 (echoed)

;; QUESTION SECTION:
; example.com. IN A

;; ANSWER SECTION:
example.com. 15 IN A 10.4.85.47
example.com. 15 IN A 10.4.83.252
example.com. 15 IN A 10.4.82.121
...
example.com. 15 IN A 10.4.82.186

In the output, you can see that dig adds EDNS0 by default and advertises a larger UDP payload size, which is 4096 bytes in this case:

;; OPT PSEUDOSECTION:
; EDNS: version: 0, flags:; udp: 4096

Below is a sample packet using dig with EDNS:

This method usually needs to be implemented in the application. One important clarification: the CoreDNS bufsize plugin does not increase the EDNS0 buffer size advertised by the client. Instead, it limits or reduces the UDP payload size to help prevent fragmentation.

Here is an example that limits the buffer size of outgoing queries to the resolver (172.31.0.10):

. {
    bufsize 512
    forward . 172.31.0.10
}

Therefore, if your goal is to make larger UDP answers succeed, bufsize is not the knob to enlarge responses. It is a defensive control to reduce fragmentation risk. Please refer to the CoreDNS documentation to get more detail.

Solution 2: Retrying over TCP when the DNS response is truncated

As mentioned, the normal behavior of any DNS server that needs to send a UDP response exceeding the 512-byte limit is to truncate the response so that it fits within that limit and then set the TC flag in the response header. When the client receives such a response, it should treat the TC flag as an indication to retry over TCP.

Based on the packet flow analysis, we can confirm that CoreDNS does not automatically retransmit the query over TCP after receiving a truncated response in this scenario. So the next question is: can we configure CoreDNS to retry over TCP when truncation occurs?

The answer is yes.

Since 1.6.2, CoreDNS has supported the prefer_udp option to handle truncated responses when forwarding DNS queries:

The prefer_udp option is described as follows:

Try first using UDP even when the request comes in over TCP. If response is truncated (TC flag set in response) then do another attempt over TCP. In case if both force_tcp and prefer_udp options specified the force_tcp takes precedence.

Here is an example of adding the option to the forward plugin:

forward . /etc/resolv.conf {
    prefer_udp
}

This option can be added when you confirm that CoreDNS is receiving truncated responses but not retrying them over TCP. However, this should not be treated as a universal recommendation for every environment.
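The following simplified Python model shows the behavior from the prefer_udp description: try UDP first, then retry over TCP when the TC flag is set. It is not the forward plugin's Go implementation. send_udp and send_tcp are hypothetical stand-ins for real transports. tcp_frame shows the 2-byte length prefix that DNS over TCP adds to each message (RFC#1035, section 4.2.2).

```python
import struct
from typing import Callable

TC_BIT = 0x0200


def is_truncated(reply: bytes) -> bool:
    """Check the TC bit in the flags word (bytes 2-3) of a DNS reply."""
    (flags,) = struct.unpack("!H", reply[2:4])
    return bool(flags & TC_BIT)


def tcp_frame(message: bytes) -> bytes:
    """DNS over TCP prefixes each message with its 2-byte length."""
    return struct.pack("!H", len(message)) + message


def exchange_prefer_udp(query: bytes,
                        send_udp: Callable[[bytes], bytes],
                        send_tcp: Callable[[bytes], bytes]) -> bytes:
    """Send over UDP first; repeat the same query over TCP only if TC is set."""
    reply = send_udp(query)
    if is_truncated(reply):
        reply = send_tcp(query)
    return reply


if __name__ == "__main__":
    transports_used = []

    def fake_udp(query: bytes) -> bytes:
        transports_used.append("udp")
        return struct.pack("!6H", 1, 0x8380, 1, 0, 0, 0)  # truncated reply

    def fake_tcp(query: bytes) -> bytes:
        transports_used.append("tcp")
        return struct.pack("!6H", 1, 0x8180, 1, 22, 0, 0)  # full reply, 22 answers

    exchange_prefer_udp(b"\x00\x01", fake_udp, fake_tcp)
    print(transports_used)       # ['udp', 'tcp']
    print(tcp_frame(b"abc"))     # b'\x00\x03abc'
```

The extra TCP exchange happens only for truncated answers. The cost of this option therefore depends on how often truncation occurs, which is what the considerations below are about.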

TCP fallback is usually a good fit when:

- The problem is confirmed to be caused by truncation.

- The number of affected queries is relatively small.

- The upstream DNS path can comfortably absorb extra TCP sessions and latency.

You should evaluate carefully before broadly preferring TCP when:

- Your workloads are latency sensitive and even a few extra milliseconds matter.

- The query volume is high.

- Your upstream chain includes additional DNS forwarding layers, such as Route 53 Resolver outbound endpoints, network appliances, or on-premises resolvers.

In those environments, sending more DNS queries over TCP can introduce another bottleneck because the protocol in use, query response time, round-trip latency, and connection tracking all affect effective resolver capacity. In other words, TCP fallback may solve truncation but still worsen throughput or tail latency somewhere else in the DNS path.

Additional consideration for hybrid DNS and Route 53 Resolver

In simple EKS deployments, the DNS flow is often close to:

Pod -> CoreDNS -> AmazonProvidedDNS

But in enterprise environments it can instead look like:

Pod -> CoreDNS -> AmazonProvidedDNS / Route 53 Resolver -> outbound endpoint -> custom DNS / on-premises DNS

That difference matters. If you enable more TCP-based retries in CoreDNS, you are not only changing Pod-to-CoreDNS behavior, you may also increase pressure on every upstream component in the forwarding chain.

For example, Route 53 Resolver endpoint capacity is documented primarily in queries per second, and AWS explicitly notes that effective capacity varies with response size, response time, round-trip latency, connection tracking, and the protocol in use. Because of that, a design that behaves well with UDP can still hit a limit earlier after moving more traffic to TCP.

As a practical rule, if your environment uses hybrid DNS forwarding:

- Confirm the truncation first, instead of assuming every failed lookup should switch to TCP.

- Consider whether enabling EDNS0 on the requester is enough for the affected records.

- Review the design of the DNS record itself if the answer set is very large.

- Monitor the upstream resolver or Route 53 Resolver endpoint capacity before and after the change.

So, if I need to summarize the recommendation in one sentence: use TCP fallback when it addresses a confirmed truncation problem and your upstream chain can handle it, but do not treat it as the default answer for all CoreDNS-related DNS failures.

Conclusion

This article takes a detailed look at a CoreDNS-related DNS resolution issue on Amazon EKS by inspecting the packet flow. The root cause is that the DNS response is truncated because the response payload exceeds 512 bytes, which prevents the client Pod from receiving the full set of IP addresses. In this case, the response is returned with the TC flag set.

This issue is closely related to the way DNS over UDP was originally designed, and truncation is expected when the payload becomes too large. As mentioned in RFC#1123, the requester is expected to handle the situation when the TC flag is set. This article walks through the behavior in detail based on packet analysis.

To remedy the problem, this article also covers several methods that can be applied on the client side or in CoreDNS, including EDNS0 and TCP retransmission. The key point is that one method is not always better than the other. EDNS0 is often the better first choice when you only need larger UDP answers for a subset of lookups, while TCP fallback is useful when truncation is confirmed and the upstream DNS path can safely absorb the additional TCP cost.

If your environment contains hybrid DNS forwarding, Route 53 Resolver endpoints, or on-premises DNS infrastructure, you should validate the end-to-end path carefully because solving truncation at CoreDNS may still expose throughput or latency limits in upstream resolvers.

